AI is transforming research and development by shifting how organizations interact with scientific content — from reading individual documents to loading large datasets for machine-driven analysis. This change is reshaping how R&D teams access, manage, and govern research data.

A significant shift is underway in how data and content are used across research and development (R&D), fueled by the rapid rise of AI tools and new ways of interacting with information.

In many respects, what we are seeing is not surprising. Increased use of AI inevitably brings questions about sourcing data, concerns about scale, and a general sense of uncertainty as established patterns start to shift. What is different is how people are reacting: the variety of responses, the experimentation, and the shared feeling that, so far, no one has quite worked out a clear, sustainable way forward.

Underlying all of this is a growing hunger for data: more specifically, for content and datasets that can be fed into machines to unlock value from AI tools across the R&D lifecycle.

One way to understand this shift is to look at how our consumption of scientific literature has evolved.

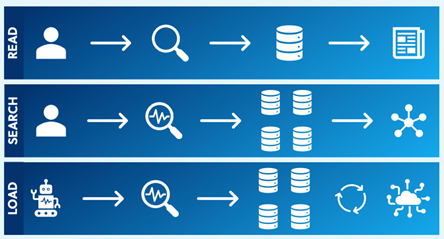

The most familiar use case is one we all recognize:

- READ: Users identify content through a preferred search tool and need access to the full text – via subscription, open access, or individual article purchase.

This is the world many of us still inhabit day to day, often through tools like PubMed or RightFind Enterprise.

A step beyond that is:

- SEARCH: Users want to explore a topic and search across multiple sources at once.

Here we move into aggregated discovery: connecting to multiple content and data sources simultaneously. This is the domain of tools such as RightFind Navigate, or broader deep-search services that crawl and index content available across the internet.

The emerging use case (arguably no longer “emerging,” but already very real) is:

- LOAD: Users identify content and datasets for large-scale computational analysis.

This is the new frontier. It is driven by automation, artificial intelligence, and the need to integrate data across systems rather than consume it one article at a time.

As access to powerful analytics and processing capabilities becomes more democratized, organizations face growing pressure to serve internal customers with datasets that are not only useful but also copyright-compliant, in support of responsible AI. This shift from human consumption to machine consumption is where many of today’s tensions are surfacing.

It is also where conversations across the industry are becoming strikingly consistent. We hear things like:

- “R&D are asking for all the data we have on a specific therapeutic area. In XML.”

- “I need lots of data, and I don’t yet know exactly what I’m looking for – just a broad therapeutic area.”

- “We have internal systems that need training. What data can I get in bulk to do that? And can I even use it to train an AI?”

Running alongside these requests is the thorny question of copyright and intellectual property. CCC has been running sessions on the copyright implications of AI, and while that topic deserves focused attention, it isn’t the core subject of this post.

What is worth noting, though, is how often these technical and data-driven questions are accompanied by a genuine desire to be compliant: to respect others’ IP, to stay within the scope of acquired rights, and to do things properly. At the same time, there is clear frustration about how difficult it can be to find an easy path to rights-cleared content that can be accessed quickly and at scale.

Taken together, this leaves us with a deceptively simple question:

“How do I get large quantities of rights-cleared content for machines to consume, in support of AI-driven use cases, and ideally do so in a way that is efficient and sustainable?”

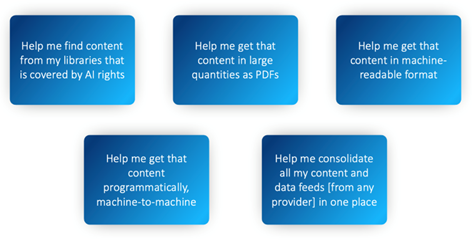

When we unpack that question, it tends to resolve into five common use cases:

- What do I already have? Help me understand what rights are available in content I already hold legally.

- How do I get it at scale as PDFs? I want to use this content to support AI tools, summarization, and evidence linking using RAG-based approaches.

- How do I get it at scale in machine-readable formats? Abstracts aren’t enough; I need full-text XML.

- How do I access it programmatically? I want machine-to-machine access so AI agents can find and consume data, and so my systems can interoperate.

- How do I simplify access? I want a single place to work across all the data I’m entitled to use, regardless of source or vendor.
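To make the first of these questions a little more concrete, here is a minimal Python sketch of what a rights-aware view over content you already hold might look like. Everything in it is an assumption for illustration: the `ContentItem` record, the `usable_for` helper, the rights labels (`"read"`, `"text-mining"`, `"ai-training"`), and the sample inventory are hypothetical and do not describe any specific product, license, or API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ContentItem:
    """Hypothetical inventory record; field names are illustrative only."""
    identifier: str
    source: str
    fmt: str                    # e.g. "pdf" or "xml"
    permitted_uses: frozenset   # hypothetical rights labels, not real license terms


def usable_for(items, use, fmt=None):
    """Return items whose recorded rights include `use`,
    optionally restricted to a single format."""
    return [
        item for item in items
        if use in item.permitted_uses and (fmt is None or item.fmt == fmt)
    ]


# Made-up sample data standing in for content an organization already holds.
inventory = [
    ContentItem("doc-001", "publisher-a", "xml",
                frozenset({"read", "text-mining", "ai-training"})),
    ContentItem("doc-002", "publisher-b", "pdf",
                frozenset({"read"})),
    ContentItem("doc-003", "publisher-a", "pdf",
                frozenset({"read", "text-mining"})),
]

# "What do I already have?" for a text-mining or RAG-style use case.
rag_ready = usable_for(inventory, "text-mining")

# "Can I even use it to train an AI?", limited to full-text XML.
trainable_xml = usable_for(inventory, "ai-training", fmt="xml")

print([item.identifier for item in rag_ready])
print([item.identifier for item in trainable_xml])
```

In practice the rights attached to each item would come from the underlying license agreements rather than a hard-coded set, and that is exactly the information many teams struggle to pull together in one place.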

These use cases are not going away. If anything, they are becoming more urgent as AI becomes embedded in everyday R&D workflows. The questions we now have to face are straightforward but challenging: how do we respond, how do we support our colleagues effectively, and how do we do so in a way that is scalable, compliant, and sustainable?

In the next post, we’ll look at the challenges these use cases expose and begin to explore some of the ways organizations might start to address them.